What is Microsoft’s Phi-Silica: The Phi Family History

Phi-Silica (announced May 21, 2024)

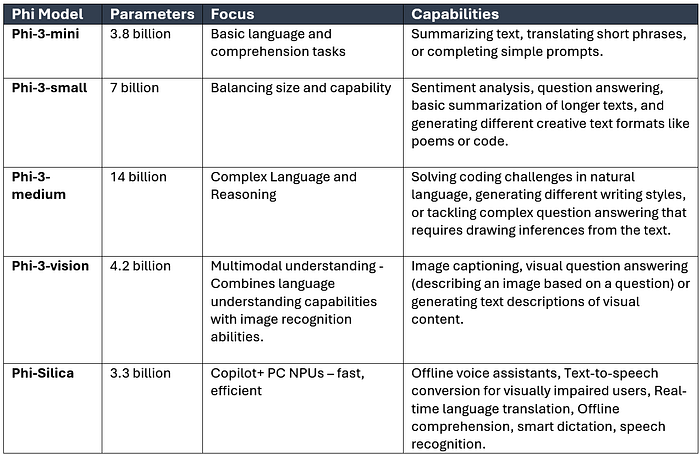

On May 21, 2024, Microsoft announced Phi-3-Silica, a new and even smaller Small Language Model (SLM) designed specifically for Copilot+ PCs. Copilot+ PCs are personal computers equipped with powerful Neural Processing Units (NPUs) that handle AI workloads efficiently. With only 3.3 billion parameters, Phi-3-Silica is the smallest model in the Phi-3 family and the first local SLM built into Windows. It will be part of the upcoming Windows Copilot Runtime.

Significance of Phi-3-Silica

- Fast and Efficient: Phi-3-Silica processes prompts at about 650 tokens per second (Microsoft's first-token figure) while drawing only about 1.5 watts, so it should not noticeably drain the battery or slow down the PC.

- Leverages NPU: Phi-3-Silica runs on the NPU, freeing up the PC’s CPU and GPU for other computations.

- Locally deployed: Phi-3-Silica runs directly on the Copilot+ PC without relying on internet connectivity, potentially improving privacy and responsiveness.

- Benefits for developers: Third-party developers can leverage Phi-3-Silica to create novel and user-friendly applications for the Windows ecosystem.

- Enhanced User Experience: Together, Phi-3-Silica and Copilot+ PCs aim to improve user productivity and accessibility through AI-powered features.
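To put the speed and power figures in perspective, dividing power draw by throughput gives a rough energy cost per token. The sketch below is a back-of-the-envelope calculation that assumes the quoted 650 tokens per second and 1.5 W apply at the same time and at a steady rate, which is a simplification; the helper names are illustrative only.

```python
# Back-of-the-envelope figures for Phi-3-Silica, ASSUMING the quoted
# throughput (650 tokens/s) and power draw (1.5 W) hold simultaneously
# and at a constant rate.
THROUGHPUT_TOKENS_PER_S = 650.0
POWER_WATTS = 1.5


def energy_per_token_mj(power_w: float, tokens_per_s: float) -> float:
    """Energy per token in millijoules: (J/s) / (tokens/s) = J/token."""
    return power_w / tokens_per_s * 1000.0


def seconds_for_prompt(num_tokens: int, tokens_per_s: float) -> float:
    """Time to process a prompt of num_tokens at a constant rate."""
    return num_tokens / tokens_per_s


if __name__ == "__main__":
    mj = energy_per_token_mj(POWER_WATTS, THROUGHPUT_TOKENS_PER_S)
    secs = seconds_for_prompt(1300, THROUGHPUT_TOKENS_PER_S)
    print(f"{mj:.2f} mJ per token")
    print(f"{secs:.1f} s for a 1,300-token prompt")
```

Under these assumptions each token costs roughly 2.3 millijoules, and a 1,300-token prompt would take about two seconds to process.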

Possible Use Cases of Phi-3-Silica

Phi-Silica’s lightweight, efficient, on-device design suggests several potential use cases, spanning local productivity, accessibility, and privacy-conscious applications.

- Offline voice assistants with limited functionality: Perform basic voice commands or answer simple questions locally without sending data to the cloud.

- On-device sentiment analysis: Analyze the tone of emails or documents locally to gain insights without compromising privacy.

- Secure voice search: Search within local files or databases using voice commands processed entirely on the device.

- Text-to-speech support for visually impaired users: Paired with a speech-synthesis engine, Phi-Silica could help read web pages or documents aloud, enhancing accessibility for visually impaired users.

- Real-time captioning for audio and video: Combined with an on-device speech recognition model, Phi-Silica could help generate captions for media files without requiring internet access, improving accessibility for deaf or hard-of-hearing users.

- Personalized language learning tools: Phi-Silica could provide on-device language learning assistance with features like vocabulary suggestions or real-time translation within learning apps.

- Real-time language translation: Translate documents, captions, or conversations on the fly without an internet connection.

- Offline writing assistance and summarization: Check grammar and style without internet access, or summarize long documents and articles locally so users can grasp key points quickly.

- Smart dictation and speech recognition: Phi-Silica could power dictation software that understands context and corrects errors locally, improving accuracy and speed.

Microsoft’s Phi-3 Family

Phi-3 Mini, Small, Medium

The Microsoft Phi-3 family is a group of open-source small language models (SLMs) known for strong capability at low cost. “Small” describes their size relative to large language models: Phi-3 models have far fewer parameters, so they require less computational power and are accessible for a wider range of applications.

What are the different models in the Phi-3 family?

- Phi-3 mini (3.8 billion parameters): The smallest of these three models, ideal for tasks requiring basic language understanding and generation. It is instruction-tuned, meaning it was trained to follow instructions phrased the way people normally communicate, so it can understand your requests out of the box without additional configuration.

- Phi-3 small (7 billion parameters): Offers a balance between size and capability, suitable for a broader range of tasks.

- Phi-3 medium (14 billion parameters): The family’s largest and most powerful model, capable of handling complex language tasks and reasoning problems.
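Because the instruction-tuned Phi-3 models are trained on a chat format, prompts sent to them are usually wrapped in role tags. The sketch below assumes the `<|user|>`, `<|assistant|>`, and `<|end|>` markers shown in the Phi-3-mini instruct model card on Hugging Face; `build_phi3_prompt` is a hypothetical helper. In practice, `tokenizer.apply_chat_template` from the Hugging Face `transformers` library renders the template bundled with each checkpoint, so this hand-written version only makes the structure visible.

```python
from typing import Dict, List

# ASSUMPTION: role tags and the <|end|> marker follow the Phi-3-mini instruct
# model card; verify against the card of the exact checkpoint you use.
ALLOWED_ROLES = {"user", "assistant"}


def build_phi3_prompt(messages: List[Dict[str, str]]) -> str:
    """Render chat turns in the Phi-3 instruct format and open an assistant turn."""
    parts = []
    for msg in messages:
        role = msg["role"]
        if role not in ALLOWED_ROLES:
            raise ValueError(f"unsupported role: {role}")
        parts.append(f"<|{role}|>\n{msg['content']}<|end|>\n")
    # The model is expected to continue generating after this tag.
    parts.append("<|assistant|>\n")
    return "".join(parts)


if __name__ == "__main__":
    print(build_phi3_prompt([
        {"role": "user", "content": "Summarize this email in one sentence."},
    ]))
```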

What makes the Phi-3 family special?

- High Performance: Phi-3 models outperform models of similar size, and even some larger models, on language tasks such as reasoning, coding, and math. This makes them efficient and effective for a range of applications.

- Cost-Effective: Phi-3 models require less computing power due to their smaller size, leading to lower operational costs than larger models.

- Versatility: The Phi-3 family comes in several sizes, measured in parameters (the learned weights of the model), so users can choose between Phi-3 mini (3.8 billion), Phi-3 small (7 billion), and Phi-3 medium (14 billion) based on the performance they need and the resources they have.
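One concrete way to see how parameter count translates into resource requirements is to estimate how much memory the weights alone occupy: parameters multiplied by bytes per parameter. The sketch below uses the parameter counts quoted above and common storage precisions; it deliberately ignores activations, the KV cache, and runtime overhead, so real usage will be higher.

```python
# Approximate memory needed just to hold the weights. This ignores
# activations, the KV cache, and runtime overhead.
PHI3_PARAMS = {
    "Phi-3 mini": 3.8e9,
    "Phi-3 small": 7e9,
    "Phi-3 medium": 14e9,
}

# Bytes per parameter for common storage precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Weights-only footprint in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for name, params in PHI3_PARAMS.items():
        row = ", ".join(
            f"{p}: {weight_memory_gb(params, p):.1f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{name:13s} {row}")
```

By this estimate, Phi-3 mini quantized to 4 bits needs roughly 1.9 GB for its weights, while Phi-3 medium at fp16 needs about 28 GB, which illustrates why the smaller models suit laptops and edge devices.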

Availability and Use

Microsoft offers Phi-3 models through various channels:

- Microsoft Azure AI Model Catalog: Provides access to the models for deployment in cloud environments.

- Hugging Face: A popular platform for sharing and using machine learning models.

- Ollama: A lightweight framework for running models on local machines for development and testing.

- NVIDIA NIM: Allows deployment of Phi-3 models as microservices with a standardized API.
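For local experimentation, Ollama exposes an HTTP API on the machine where it runs. The sketch below assumes Ollama's default address (`http://localhost:11434`), its `/api/generate` endpoint, and the `phi3` model tag (downloaded beforehand with `ollama pull phi3`); the helper functions are illustrative and use only the Python standard library.

```python
import json
import urllib.request

# ASSUMPTION: a local Ollama server on its default port, with the "phi3"
# model already pulled.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "phi3") -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "phi3", timeout: float = 120.0) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    req = build_generate_request(prompt, model)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]


if __name__ == "__main__":
    try:
        print(generate("Explain in one sentence what a small language model is."))
    except OSError as err:  # URLError and timeouts are both OSError subclasses
        print(f"Could not reach Ollama at {OLLAMA_URL}: {err}")
```

Setting `stream` to false asks Ollama for a single JSON object instead of a series of partial responses, which keeps the client simple at the cost of waiting for the full answer.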

Phi-3-vision (announced as preview, not generally available)

Phi-3-vision is a new multimodal variant that handles general visual reasoning tasks as well as charts, graphs, and tables. This gives developers a single model that can interpret information presented in both text and images.

Imagine the potential: the visual reasoning power of a large language model (LLM) at a fraction of the cost. With only 4.2 billion parameters, Phi-3-vision could significantly accelerate AI adoption.

While currently in preview, Phi-3-vision showcases exciting possibilities. Users can ask specific questions about charts or use open-ended inquiries to extract meaning from images. The public release date for Phi-3-vision remains to be announced by Microsoft.